When using inferential statistics, we commonly use two paradigms to understand our results: null hypothesis significance testing (NHST, aka statistical significance) and effect sizes (aka the quantitative difference between groups). NHST tests whether there is a difference between groups, and effect sizes describe how large that difference is. While there is a movement away from NHST towards effect sizes, the current best practice typically includes both. This post explains how to interpret and calculate effect sizes.

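Effect sizes are calculated from the same base numbers as NHST: the variance between and within groups. NHST is evaluated based on p-values (e.g., p < .05). Technically, p-values cannot be used as an indicator of effect size because they are tied to alpha values and the NHST framework: a p-value is compared against alpha to decide whether a result is significant, and it also depends on sample size, so a smaller p-value does not mean a larger effect. For the same reason, a p-value should not be reported as the likelihood that a result is due to random chance, even though it is often read that way. Within NHST, it is the probability of obtaining a result at least this extreme if the null hypothesis were true.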

There are two types of effect sizes that are commonly used.

Difference between Groups

This type of effect size describes the difference between groups’ means using a measure of variability (typically standard deviation) as the unit. For example, if you were comparing two groups that had means of 6 and 12, the effect size would be much larger if the standard deviation were 2 than if it were 6. If the standard deviation were 2, the group that had a mean of 12 is 3 standard deviations higher than the group that had a mean of 6. Assuming normally distributed scores, this means that more than 99% of participants in the higher group scored better than the average participant in the lower group. If the standard deviation were 6, the difference between groups is 1 standard deviation. This means that only about 84% of participants in the higher group scored better than the average participant in the lower group.
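
The arithmetic in this example can be checked with a short sketch. It assumes normally distributed scores with the same standard deviation in both groups; the function names are made up for illustration and are not from any statistics package.

```python
import math


def cohens_d(mean_1, mean_2, sd_pooled):
    """Difference between two means, expressed in standard deviation units."""
    return abs(mean_1 - mean_2) / sd_pooled


def proportion_above_other_mean(d):
    """Share of the higher group that scores above the lower group's mean.

    Assumes normal scores with equal SDs, so this is the standard
    normal cumulative distribution function evaluated at d.
    """
    return 0.5 * (1.0 + math.erf(d / math.sqrt(2.0)))


# The two scenarios from the text: means of 6 and 12, SD of 2 or 6.
for sd in (2, 6):
    d = cohens_d(6, 12, sd)
    share = proportion_above_other_mean(d)
    print(f"SD = {sd}: d = {d:.1f}, share above lower mean = {share:.3f}")
```

With a standard deviation of 2, d is 3 and the share is about 99.9%; with a standard deviation of 6, d is 1 and the share is about 84%.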

Cohen’s d (for t-test) and f (for ANOVA), listed in the inferential statistics post, are both effect sizes that describe the difference between groups.

Proportion of Variance Accounted for by Treatment (PVAT)

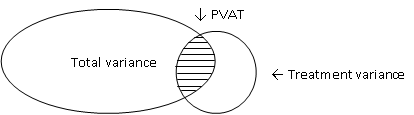

This type of effect size describes the proportion of total variance accounted for by the treatment. For example, if you had a value of .25, then 25% of the total variance in scores can be attributed to the treatment. PVAT values are typically pretty low in educational research because many other factors affect learners’ performance, e.g., learning strategy, motivation, and time of day. In the diagram above, all of these factors are part of the total variance, including PVAT. Treatment variance includes PVAT and measurement error.

The common statistics for PVAT in ANOVA are η² (eta squared) and ω² (omega squared). η² tends to be biased upward, whereas ω² tends to be more conservative. Partial η² and ω² are for estimating the unique variance caused by one independent variable (i.e., doesn’t include overlap from other independent variables). Thus, in a one-way ANOVA (with only one independent variable), partial η² or ω² is the same as non-partial. The common statistic for PVAT in regression is R² (the value of R squared).
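
To see why ω² is the more conservative of the two, here is a minimal sketch that computes both from raw scores in a one-way design. The three groups of scores are invented for illustration, and the function name is hypothetical; the formulas are the standard sum-of-squares definitions.

```python
def one_way_effect_sizes(groups):
    """Return (eta squared, omega squared) for a one-way ANOVA design.

    `groups` is a list of lists, one list of scores per condition.
    """
    scores = [x for group in groups for x in group]
    n_total = len(scores)
    grand_mean = sum(scores) / n_total

    # Total variability of every score around the grand mean.
    ss_total = sum((x - grand_mean) ** 2 for x in scores)
    # Variability of the group means around the grand mean.
    ss_treatment = sum(
        len(group) * (sum(group) / len(group) - grand_mean) ** 2
        for group in groups
    )
    ss_error = ss_total - ss_treatment

    df_treatment = len(groups) - 1
    df_error = n_total - len(groups)
    ms_error = ss_error / df_error

    eta_sq = ss_treatment / ss_total
    # Omega squared subtracts the variance expected from error alone.
    omega_sq = (ss_treatment - df_treatment * ms_error) / (ss_total + ms_error)
    return eta_sq, omega_sq


# Made-up scores for three conditions (not real data).
example_groups = [[4, 5, 6, 5], [6, 7, 8, 7], [9, 8, 10, 9]]
eta_sq, omega_sq = one_way_effect_sizes(example_groups)
print(f"eta squared = {eta_sq:.3f}, omega squared = {omega_sq:.3f}")
```

For these invented scores, ω² comes out smaller than η² because it corrects for the share of between-group variance that error alone would produce.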

Calculating Effect Sizes

If you are using SPSS to run inferential statistics, here are the formulas that you need to calculate effect sizes. Other analysis packages automatically include many of these options. There are also calculators online, but you’ll still need the values listed below.

| Inferential Test | Effect Size | Type of Effect Size | How to Calculate | Large Effect | Medium Effect | Small Effect |
| --- | --- | --- | --- | --- | --- | --- |
| t (t-test) | d | Difference | \|M1 – M2\| / √(SD²pooled) | .8 | .5 | .2 |
| F (ANOVA) | f | Difference | √((df treatment × F) / N) | .4 | .25 | .1 |
| t or F | η² | PVAT | SS treatment / SS total | ~.2 | ~.1 | ~.05 |
| t or F | Partial η² | PVAT | Calculated by SPSS | ~.2 | ~.1 | ~.05 |
| t or F | ω² | PVAT | (SS treatment – df treatment × MS error) / (SS total + MS error) | ~.2 | ~.1 | ~.05 |
| t or F | Partial ω² | PVAT | (F – 1) / (F + ((df error + 1) / df treatment)) | ~.2 | ~.1 | ~.05 |
| R (regression) | R² | PVAT | Calculated by SPSS | ~.2 | ~.1 | ~.05 |

M = mean

SD = standard deviation

df = degrees of freedom (treatment outlined in blue, error outlined in green)

N = total number of participants

SS = sum of squares (treatment outlined in red, total outlined in purple)

MS = mean squares (error outlined in orange)
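
If you would rather script the formulas than compute them by hand, they can be written as small functions. This is a sketch, not a replacement for your analysis package: the function names are hypothetical, the pooled standard deviation uses the standard degrees-of-freedom weighting (which this post does not spell out), and the ANOVA values at the bottom are invented stand-ins for what you would read off the SPSS output.

```python
import math


def pooled_sd(sd_1, n_1, sd_2, n_2):
    """Pooled SD of two groups, weighted by degrees of freedom (assumed formula)."""
    pooled_variance = ((n_1 - 1) * sd_1 ** 2 + (n_2 - 1) * sd_2 ** 2) / (n_1 + n_2 - 2)
    return math.sqrt(pooled_variance)


def cohens_d(mean_1, mean_2, sd_pooled):
    """d = |M1 - M2| / pooled SD."""
    return abs(mean_1 - mean_2) / sd_pooled


def cohens_f(f_stat, df_treatment, n_total):
    """f = sqrt((df_treatment * F) / N)."""
    return math.sqrt(df_treatment * f_stat / n_total)


def eta_squared(ss_treatment, ss_total):
    """Eta squared = SS_treatment / SS_total."""
    return ss_treatment / ss_total


def omega_squared(ss_treatment, ss_total, df_treatment, ms_error):
    """Omega squared = (SS_treatment - df_treatment * MS_error) / (SS_total + MS_error)."""
    return (ss_treatment - df_treatment * ms_error) / (ss_total + ms_error)


def partial_omega_squared(f_stat, df_treatment, df_error):
    """Partial omega squared = (F - 1) / (F + (df_error + 1) / df_treatment)."""
    return (f_stat - 1) / (f_stat + (df_error + 1) / df_treatment)


# Invented one-way ANOVA output: 3 groups, 12 participants in total.
ss_treatment, ss_total = 32.0, 38.0
df_treatment, df_error, n_total = 2, 9, 12
ms_error = (ss_total - ss_treatment) / df_error
f_stat = (ss_treatment / df_treatment) / ms_error

print("f:", round(cohens_f(f_stat, df_treatment, n_total), 3))
print("eta squared:", round(eta_squared(ss_treatment, ss_total), 3))
print("omega squared:", round(omega_squared(ss_treatment, ss_total, df_treatment, ms_error), 3))
print("partial omega squared:", round(partial_omega_squared(f_stat, df_treatment, df_error), 3))
```

Because the invented example has only one independent variable, ω² and partial ω² come out the same, which matches the point made earlier about one-way ANOVA.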

To view more posts about research design, see a list of topics on the Research Design: Series Introduction.
